This week, the AWS IoT Device SDK for Swift reached general availability. As a member of the Swift Server Workgroup (SSWG), I found this one especially interesting. The SDK brings production-ready MQTT 5 connectivity, Device Shadow, Jobs, and fleet provisioning to Swift developers on macOS, iOS, tvOS, and Linux.

I’m curious to see what you will build with it. Swift on the server has matured over the past few years, and now it reaches IoT devices too. This connects to a broader trend of running Swift at the edge. WendyOS, for example, is an open-source operating system for physical AI that offers first-class Swift support for deploying apps to NVIDIA Jetson and Raspberry Pi hardware. Between server-side Swift, IoT, and edge computing, the language is showing up in places that would have surprised most people a few years ago.

Now, let’s get into this week’s AWS news.

Headlines

Amazon RDS for SQL Server supports Bring Your Own Media (BYOM) — Customers who migrate SQL Server applications from on-premises environments can now reuse their existing Microsoft SQL Server licenses, including Software Assurance, through Microsoft’s License Mobility program on Amazon RDS. BYOM is integrated with AWS License Manager for tracking license usage and compliance. Read more.

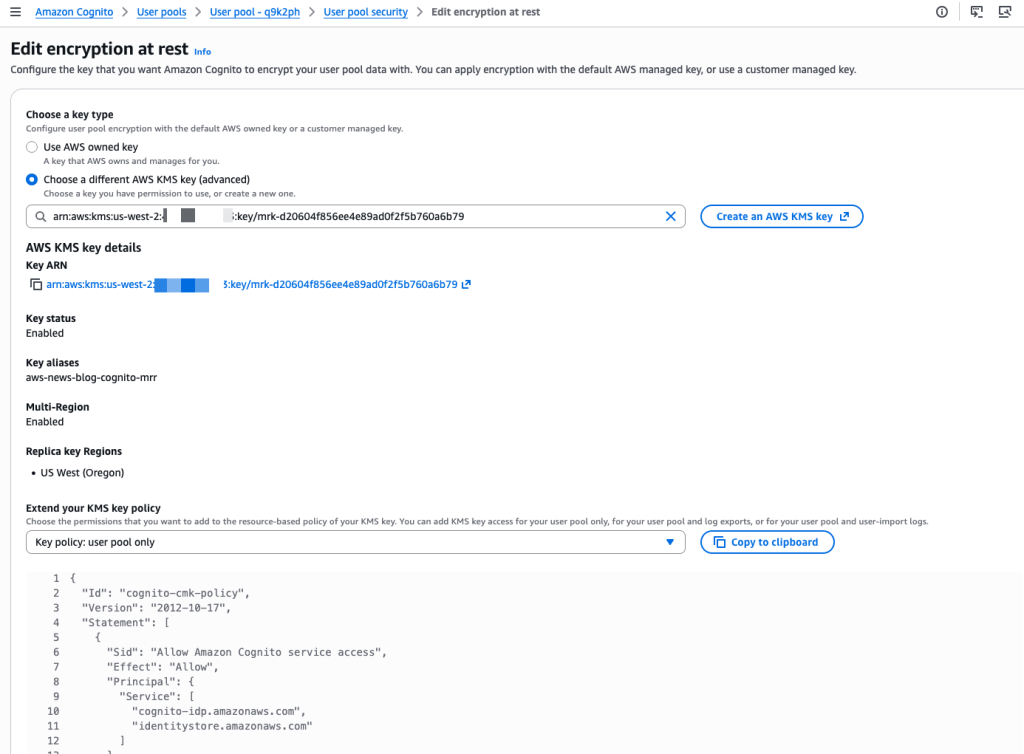

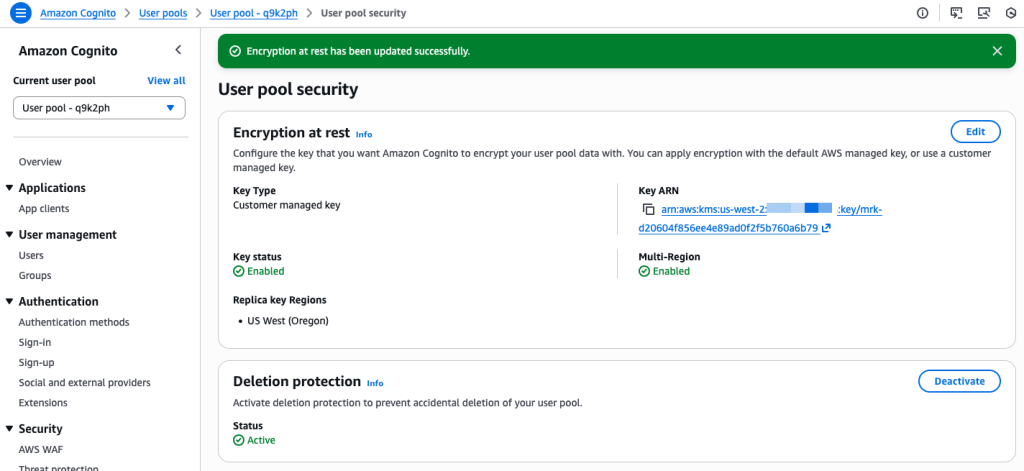

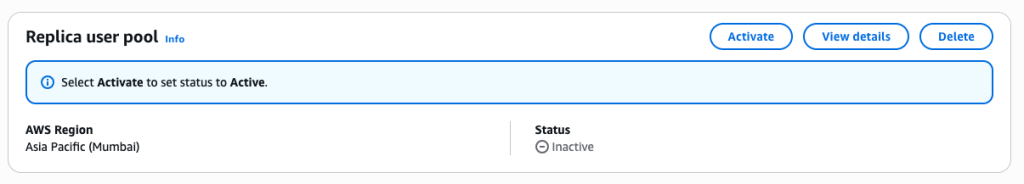

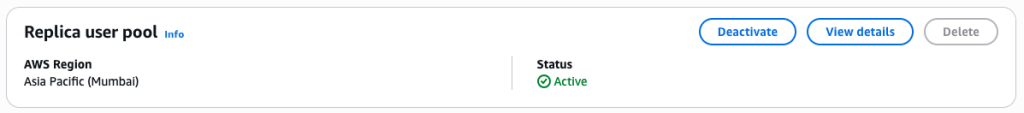

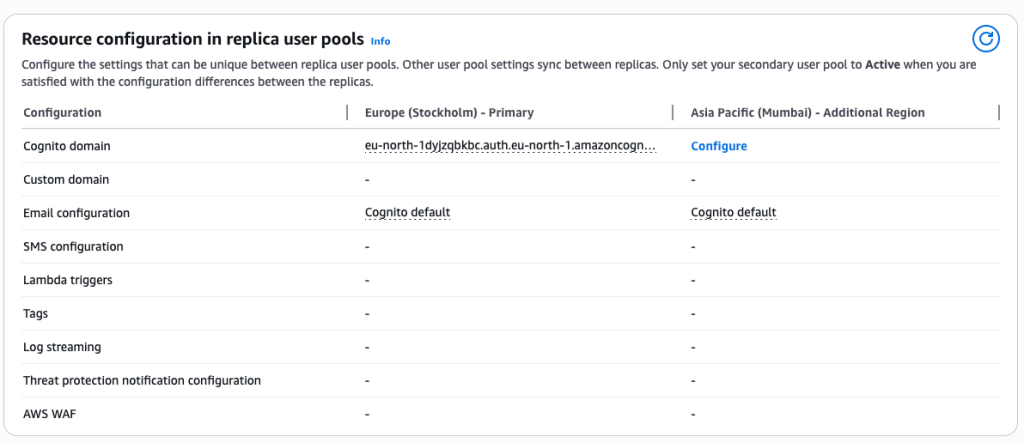

Amazon Cognito now supports multi-Region replication — You can now synchronize user and machine identity data, including credentials, user pool configurations, and federation setups, to a secondary user pool in a standby Region in near real-time. In the event of a disruption in the primary Region, signed-in users continue accessing their applications without re-authenticating, and registered users can sign in with their existing credentials. Multi-Region replication is available as an add-on for user pools in Essentials or Plus feature tiers across 16 Regions. Read more.

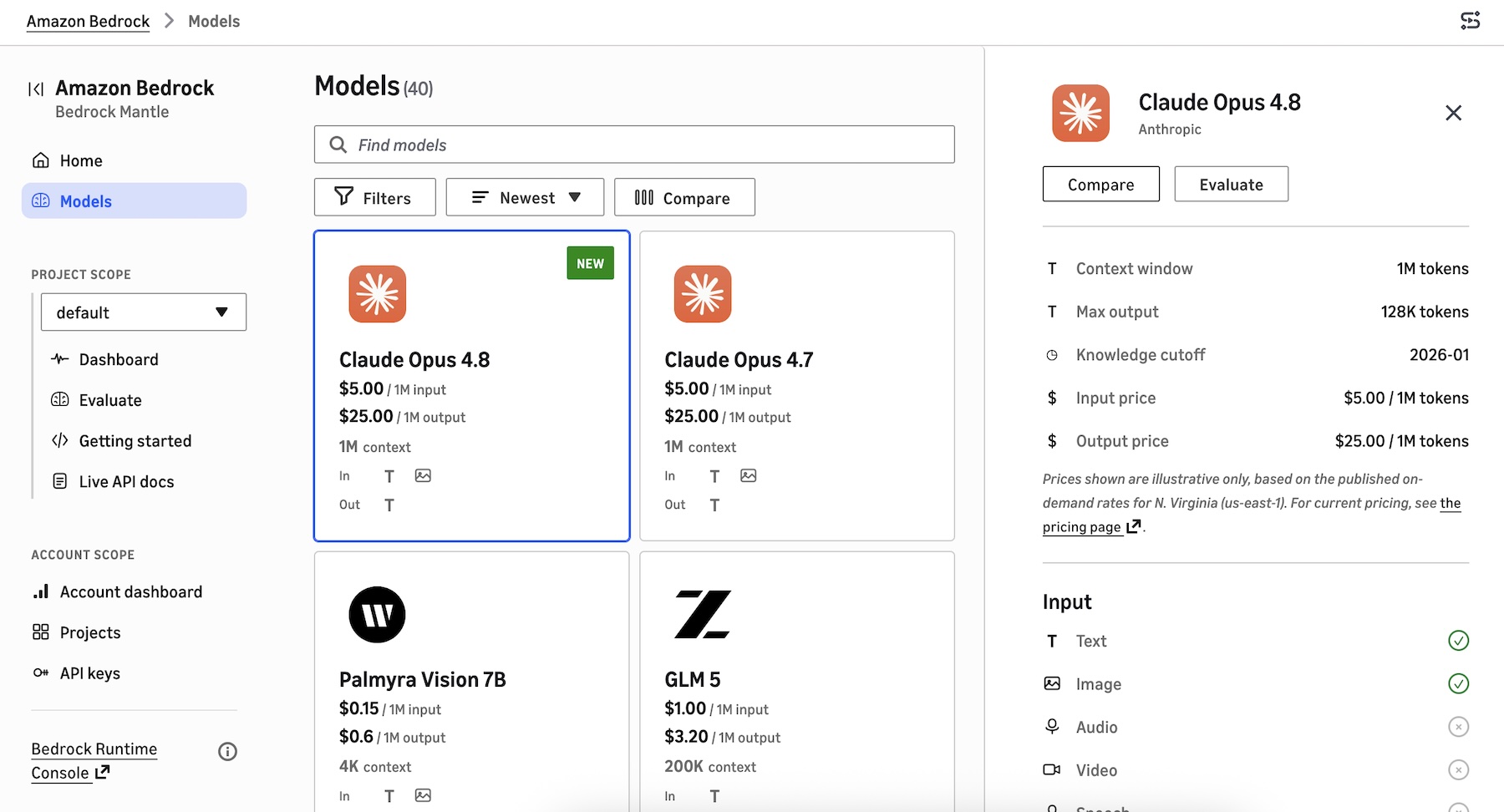

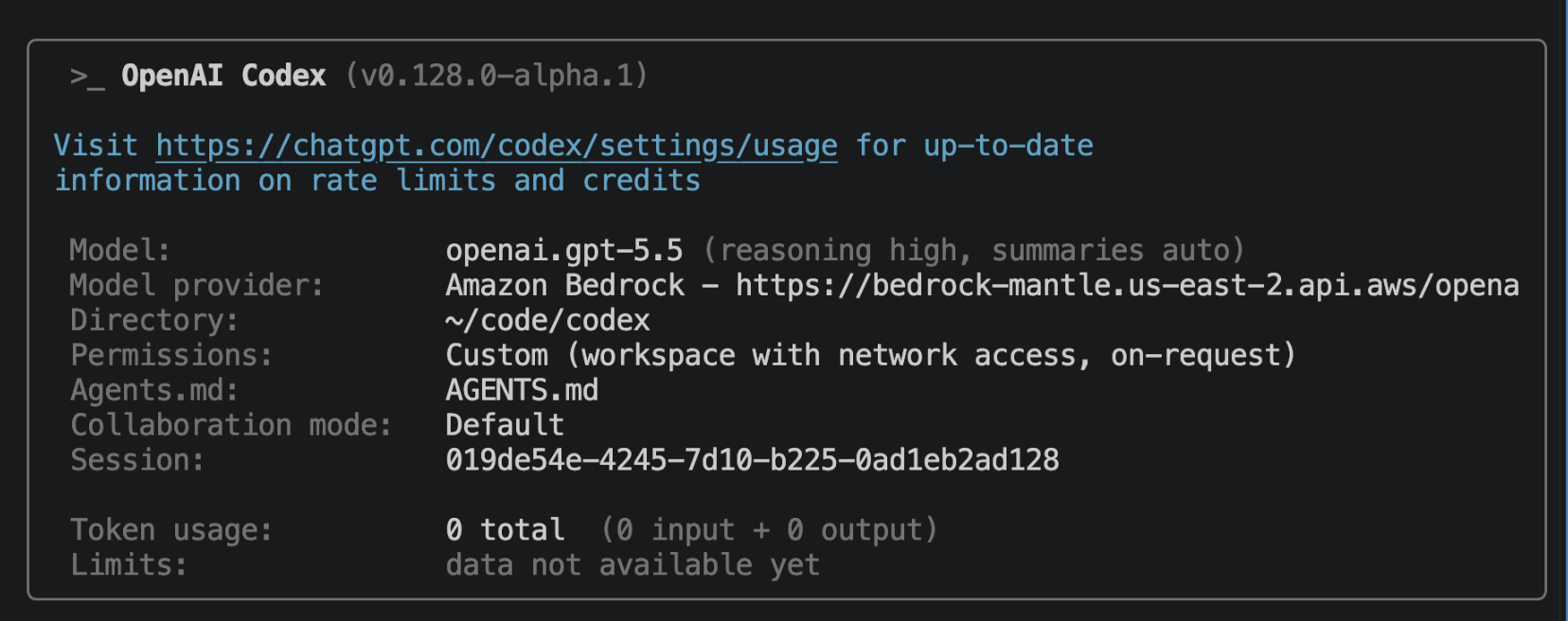

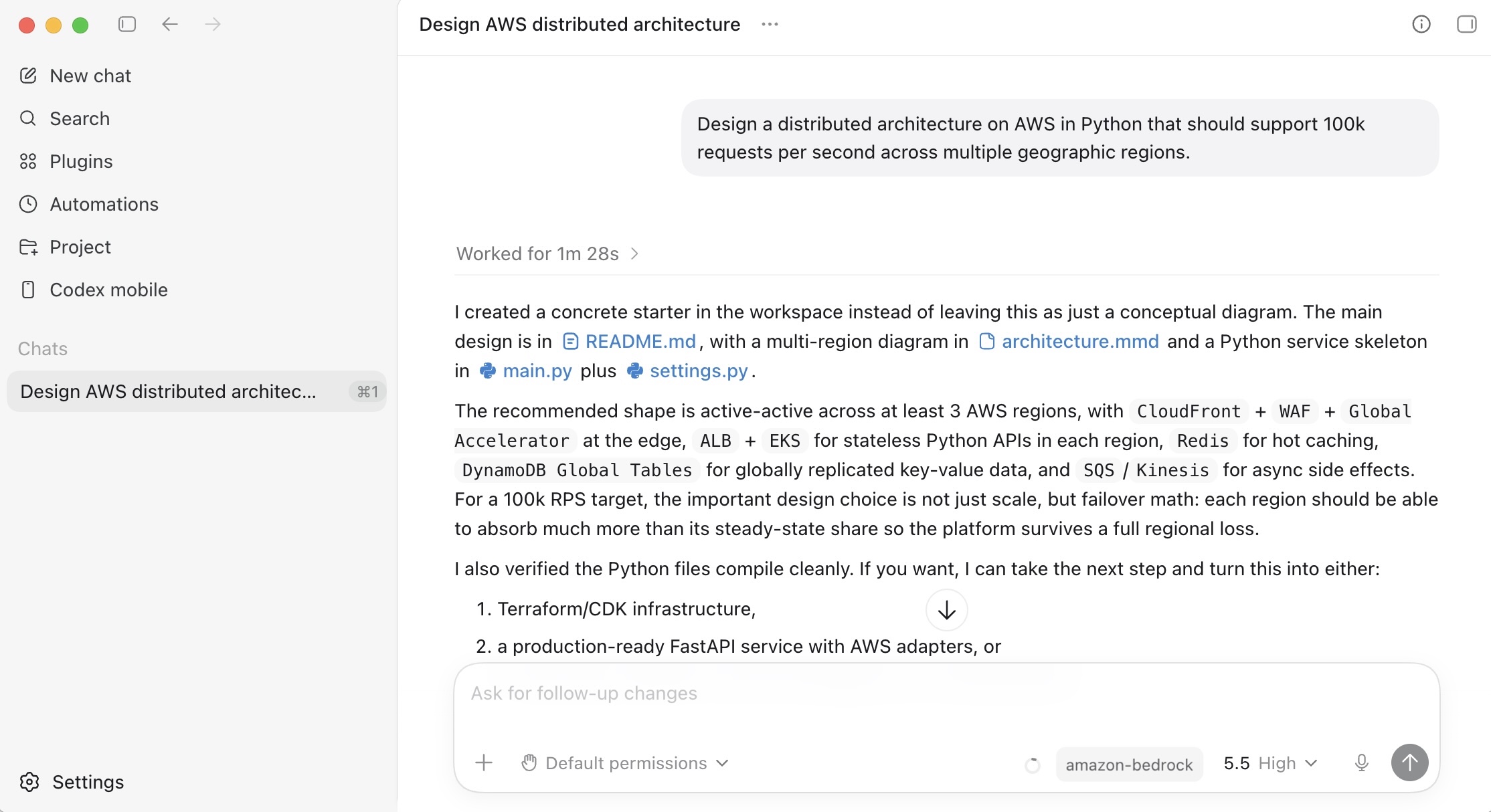

GPT-5.5, GPT-5.4, and Codex from OpenAI are now generally available on Amazon Bedrock — You can now use GPT-5.5 and GPT-5.4 in production workloads on Amazon Bedrock and build with Codex for AI-powered software development, with the same security, governance, and operational controls you already use across AWS. GPT-5.5 is the most capable model from OpenAI, excelling at agentic coding, data analysis, and multi-step autonomous tasks. Codex is available through the Codex App, the Codex CLI, and IDE integrations with Visual Studio Code, JetBrains, and Xcode. Pricing matches OpenAI first-party rates, and usage counts toward existing AWS commitments. Read more.

Last week’s launches

Here are some launches and updates from this past week that caught my attention:

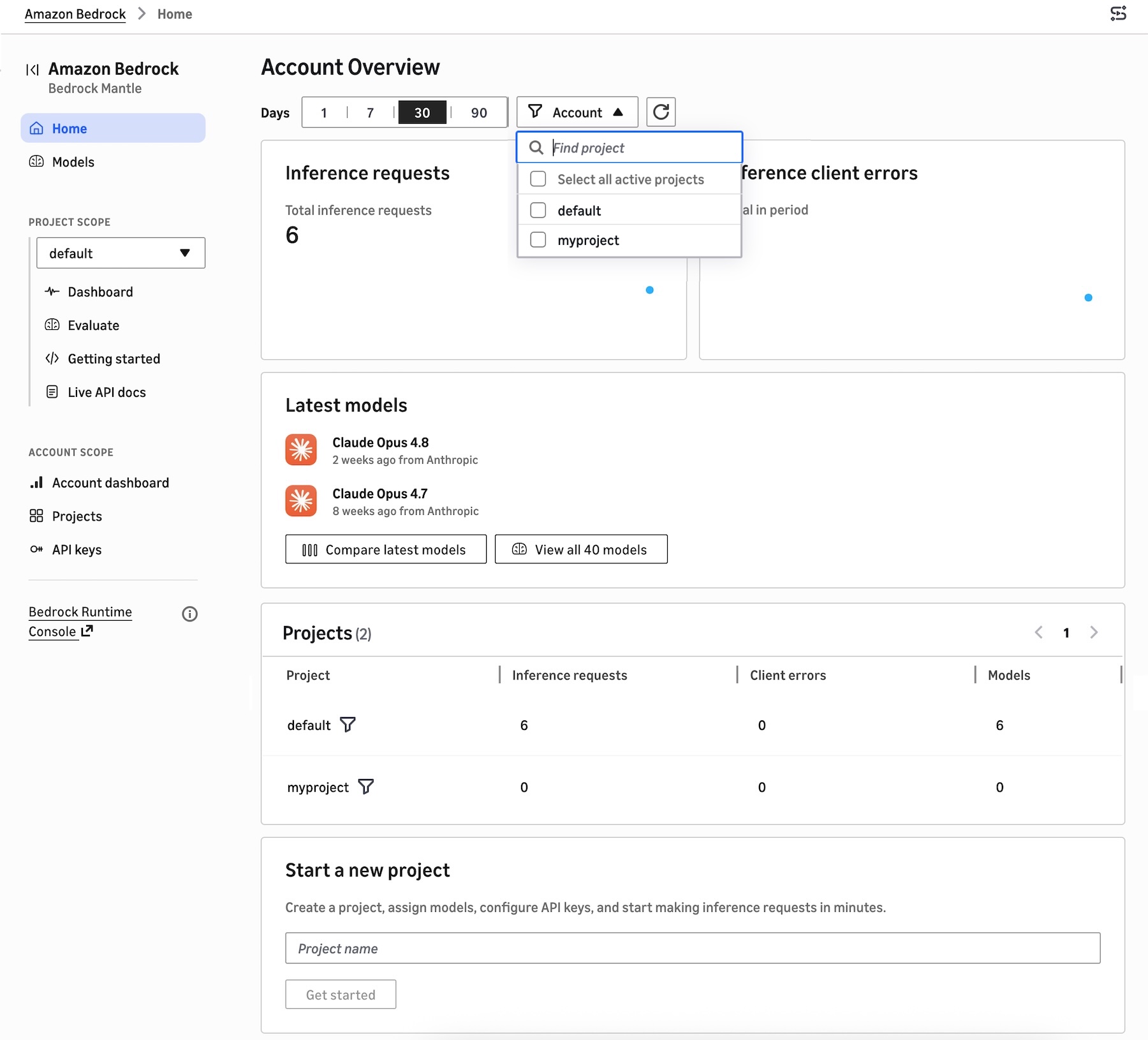

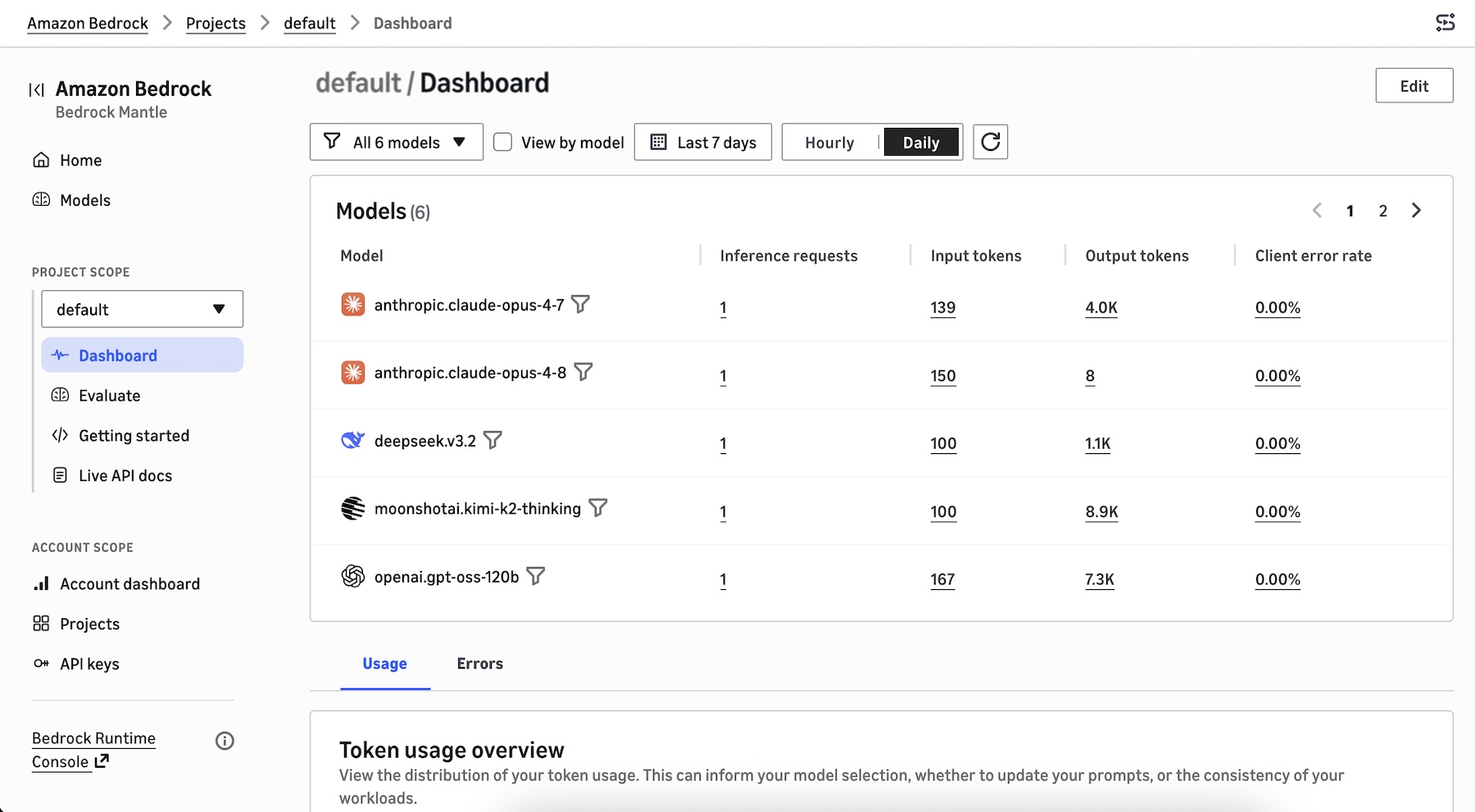

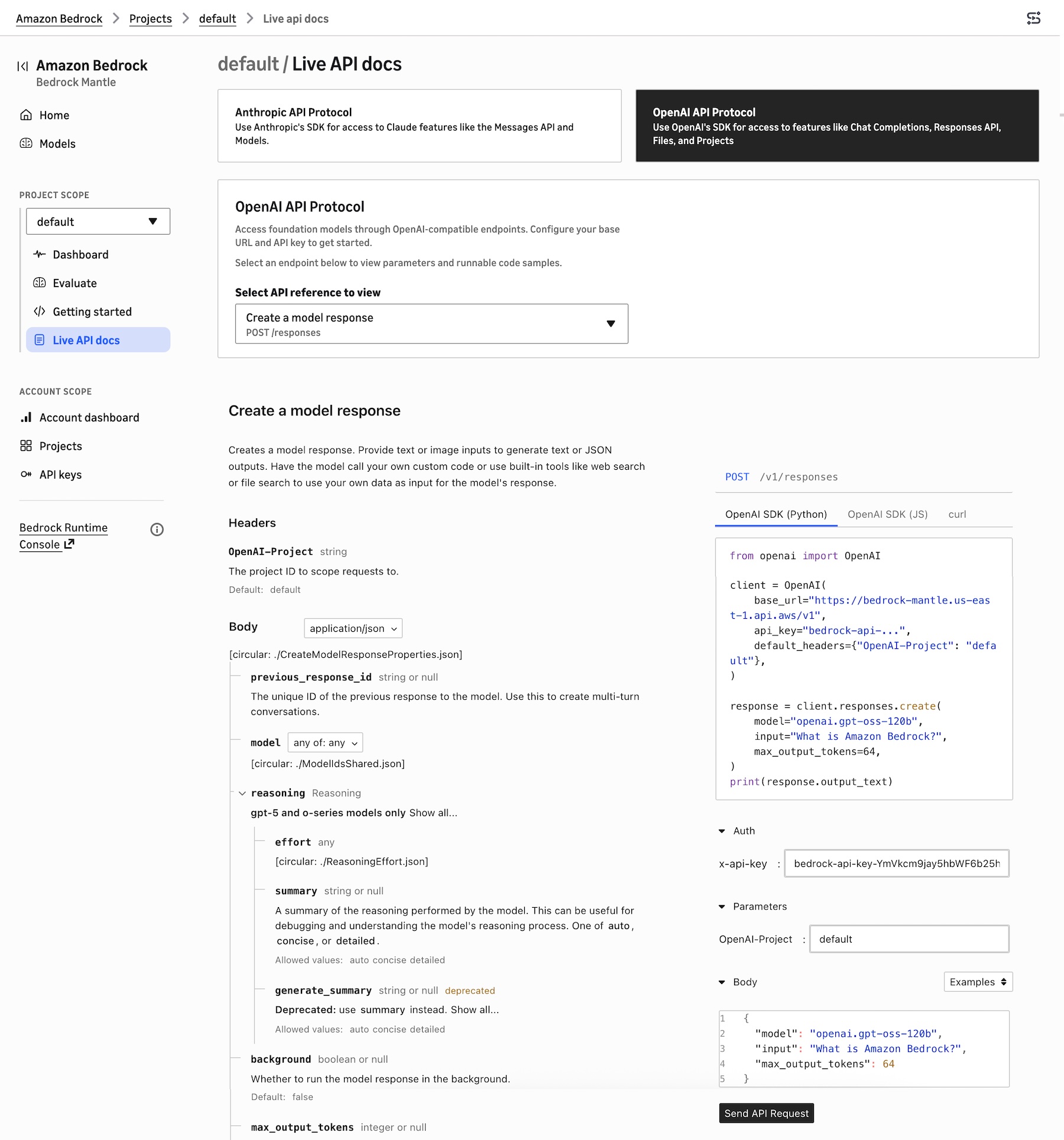

- Amazon Bedrock adds CloudWatch metrics for OpenAI- and Anthropic-compatible APIs — You can now monitor inference traffic to the bedrock-mantle endpoint with CloudWatch metrics, including inference counts, input and output token totals, and client error counts at account, project, model, and project-and-model granularity.

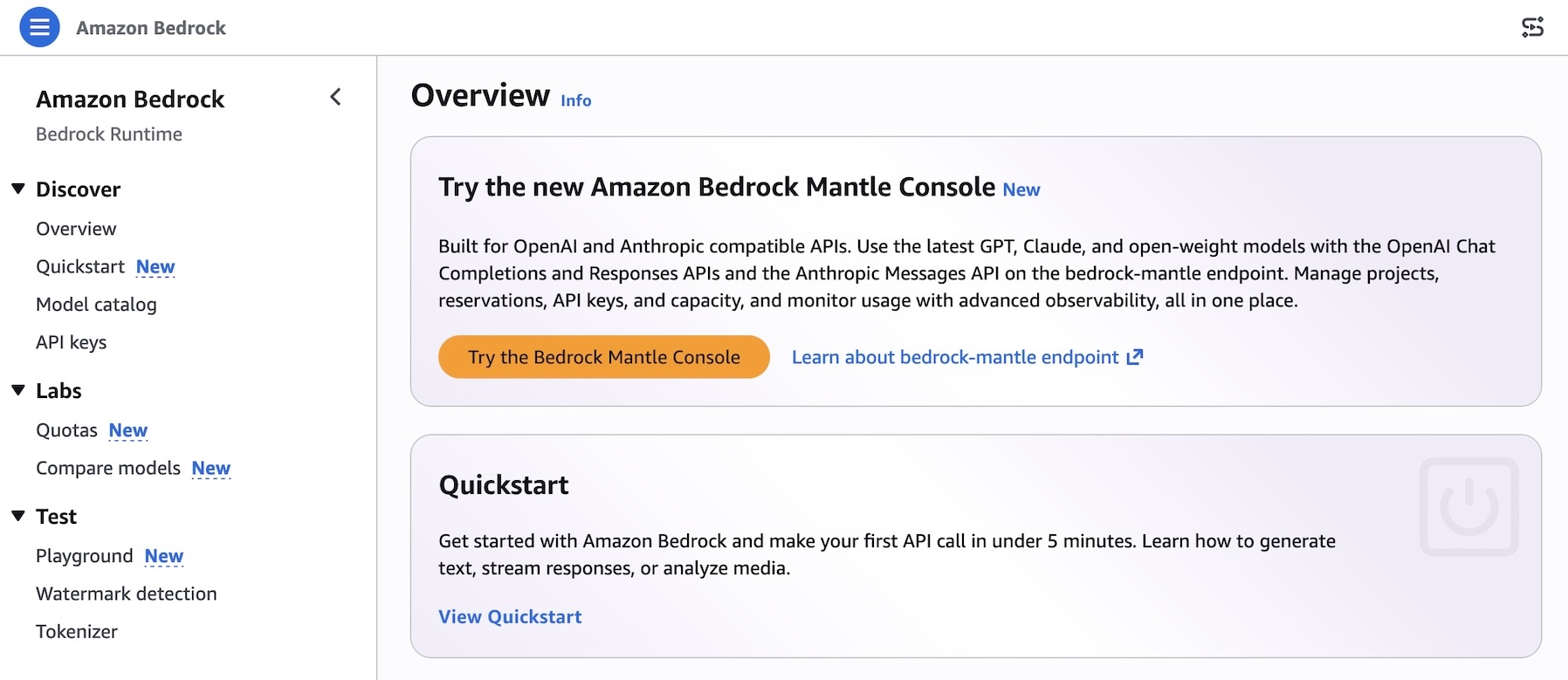

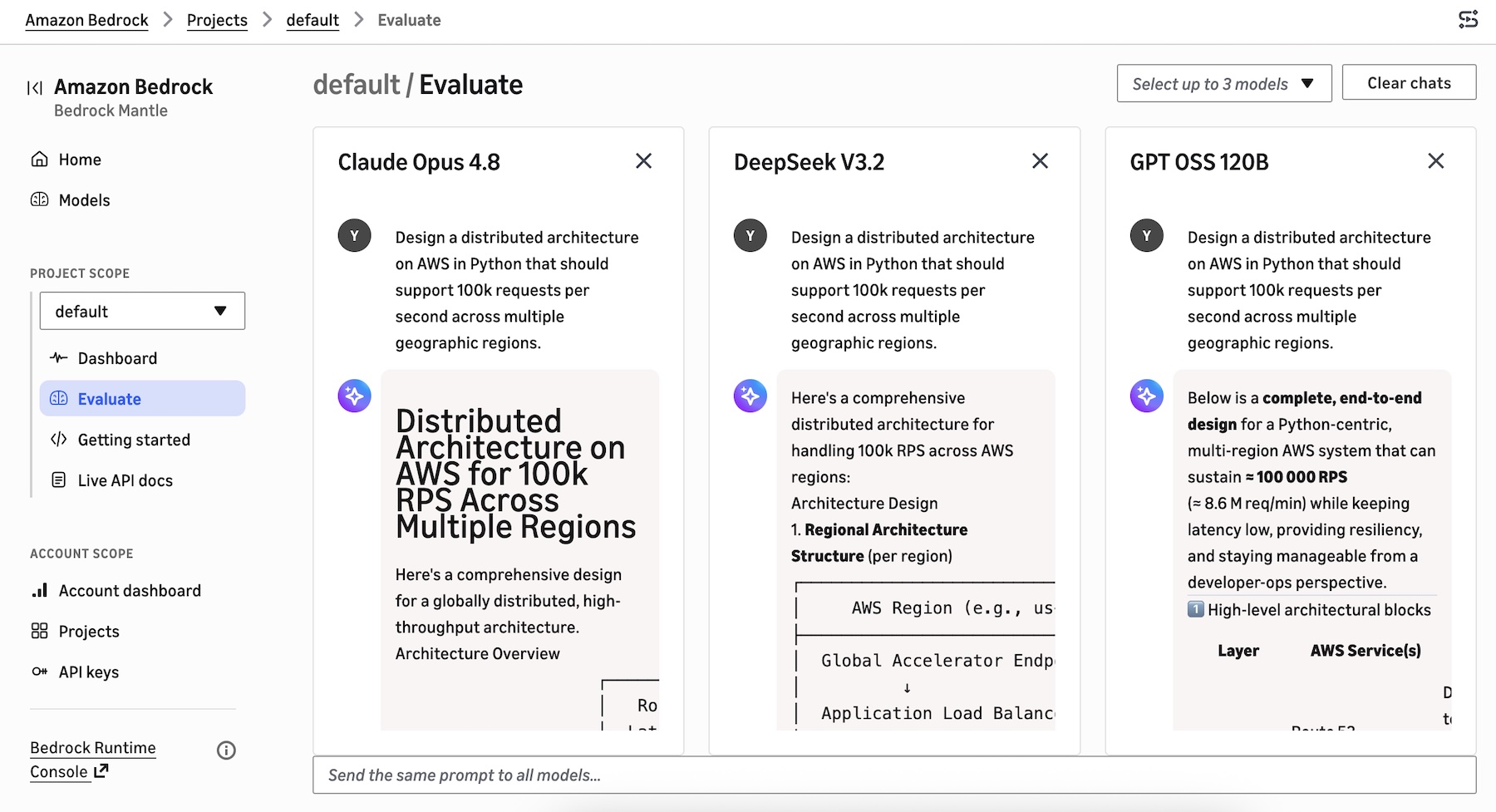

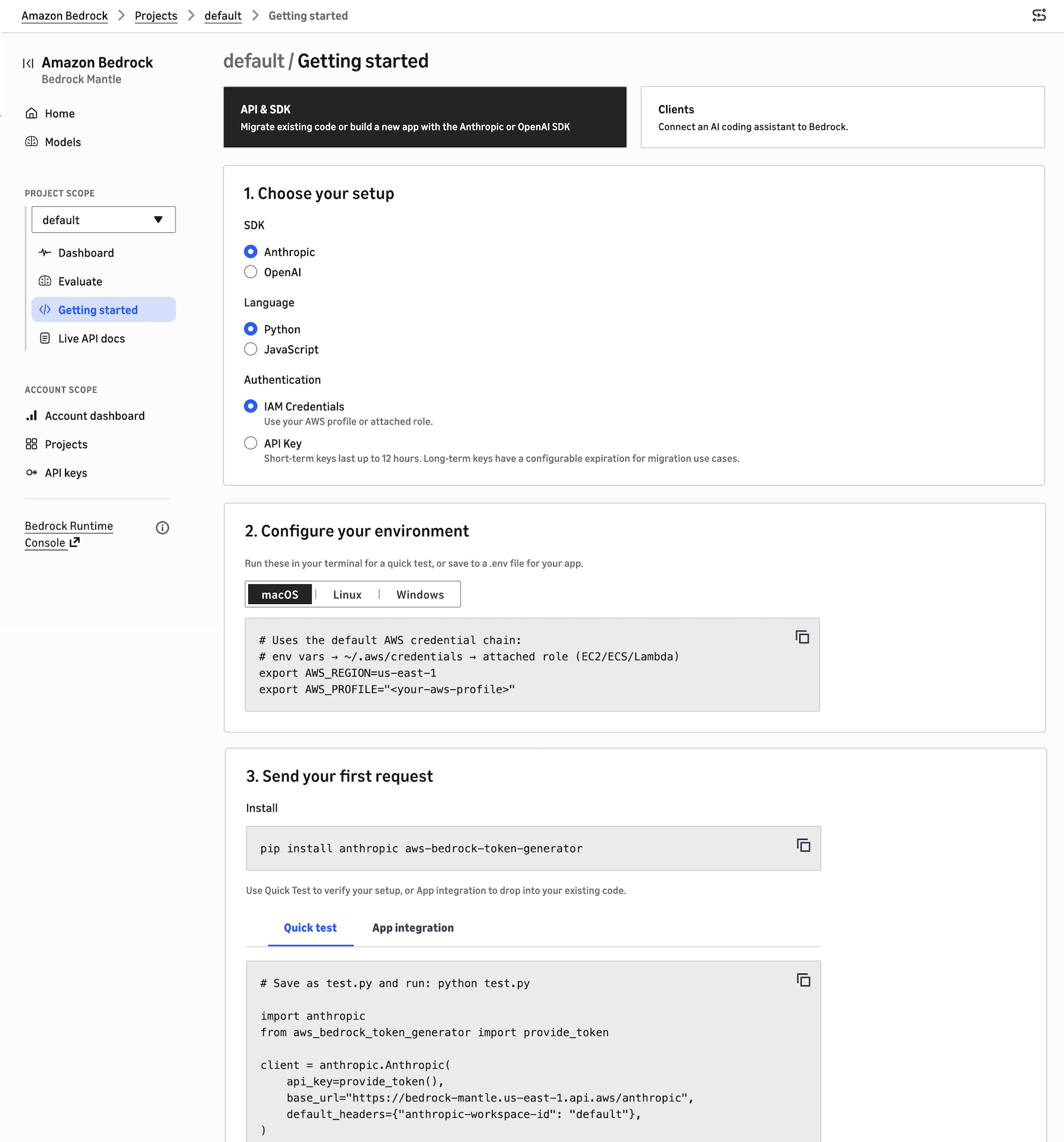

- Amazon Bedrock launches a redesigned console optimized for OpenAI- and Anthropic-compatible APIs — A refreshed console workflow with a model catalog, side-by-side comparison, project-based organization, and project-aware documentation with pre-filled code snippets.

- Amazon Bedrock AgentCore Identity now supports bring-your-own secrets with AWS Secrets Manager — Customers can now reference existing AWS Secrets Manager secret ARNs in AgentCore Identity Credential Providers, enabling full ownership of secrets governance including custom CMKs, tagging strategies, and automatic rotation.

- AWS Step Functions adds AgentCore-powered agentic reasoning step — You can now add AI agent reasoning steps to your Step Functions workflows through an integration with the managed harness in Amazon Bedrock AgentCore. Run multiple agents in parallel or sequence, add human approval, and trace every agent decision.

- Amazon EKS and Amazon EKS Distro now support Kubernetes version 1.36 — Kubernetes 1.36 promotes User Namespaces to GA, introduces Mutating Admission Policies, In-Place Pod-Level Resources Vertical Scaling, and Resource Health Status reporting. Available in all Regions where EKS is available.

- Amazon ECS Managed Instances now supports AWS Trainium and AWS Inferentia — You can now create an ECS Managed Instances capacity provider with Inferentia2, Trainium1, and Trainium2 instance types and have Amazon ECS automatically allocate all accelerator resources to your workload.

- Amazon Quick now supports VPC connectivity for MCP connections — Enterprise customers can now connect privately hosted Model Context Protocol (MCP) servers to Amazon Quick through a VPC, enabling secure access to proprietary applications and internal tools without exposing them to the internet.

- AWS Cost and Usage Report 2.0 now supports Athena and Redshift integration — CUR 2.0 exports are automatically delivered in the optimal format for your chosen query engine, with supporting infrastructure templates, table definitions, and data loading instructions.

- Amazon Location Service announces public transit and intermodal routing — The Routes API now supports two new travel modes, Transit and Intermodal, to plan journeys combining public transportation with walking, driving, taxi, and rental segments across 13 Regions.

For a full list of AWS announcements, be sure to keep an eye on the What’s New with AWS page.

Upcoming AWS events

Learn more about AWS, browse and join upcoming AWS-led in-person and virtual events, startup events, and developer-focused events as well as AWS Summits and AWS Community Days. Join the AWS Builder Center to connect with builders, share solutions, and access content that supports your development.

That’s all for this week. Check back next Monday for another Weekly Roundup!

— seb

from AWS News Blog https://ift.tt/0ozwdZE

There’s something genuinely energizing about working with startups — something I’ve been doing intensely for more than two years now. Startups operate at a different frequency: the urgency is real, the constraints are tight, and the stakes are personal. Helping them navigate the challenge of proving their business model requires not just technical depth but a willingness to move fast, challenge assumptions, and make bets on the right architecture before the perfect data exists.

Just a year ago, we launched